Fake reading and readicide have been well documented as the enemies of English teachers everywhere. The workshop model does a nice job of thwarting each by offering students choice and ownership over their reading lives. In a previous post, Shana suggested that if the reading is authentic and student-centered, it can even be independent from grades. Finding the balance of autonomy and accountability is still a challenge, though: how do we turn students loose to explore books while still gathering evidence of their mastery of the reading standards?

This year I resolved to rely less on quizzes or study guides that are averaged into a grade as a way to solve this dilemma. The last few years I’ve been moving more toward a combination of one-on-one conferencing and informal reading check-ins that give students space to respond to what they’re reading while also demonstrating some skill mastery. This year I decided to experiment with reading portfolios in my junior English classes, asking students to gather evidence of their reading in one place that would make up a quarterly reading grade. It is a more holistic approach to considering their reading work. This is the rough progression we’ve followed:

Goal setting

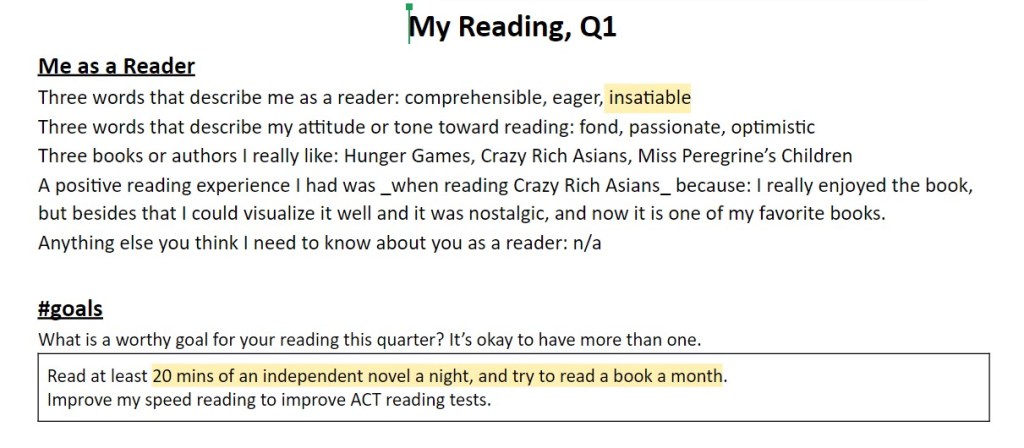

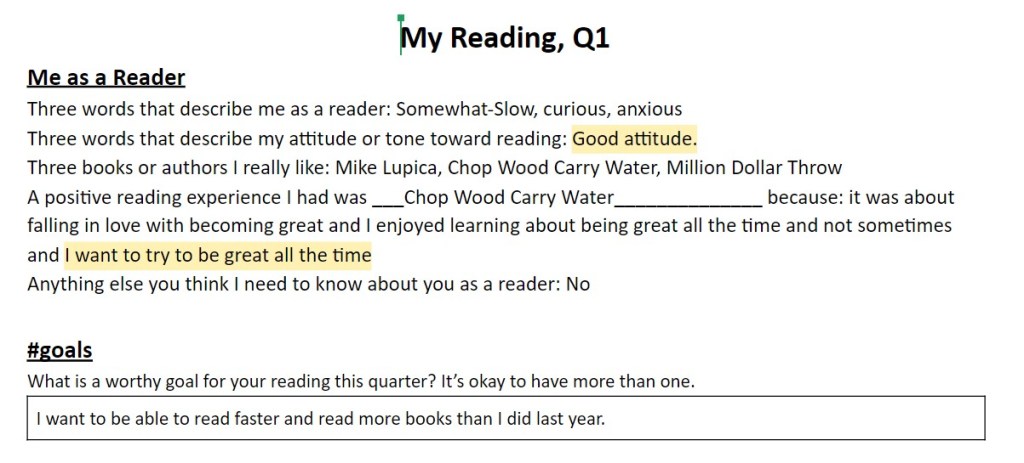

At the top of our collection doc I asked students to consider their reading lives and to set a goal for that quarter. You can see a quick example here:

Delineate the types of reading

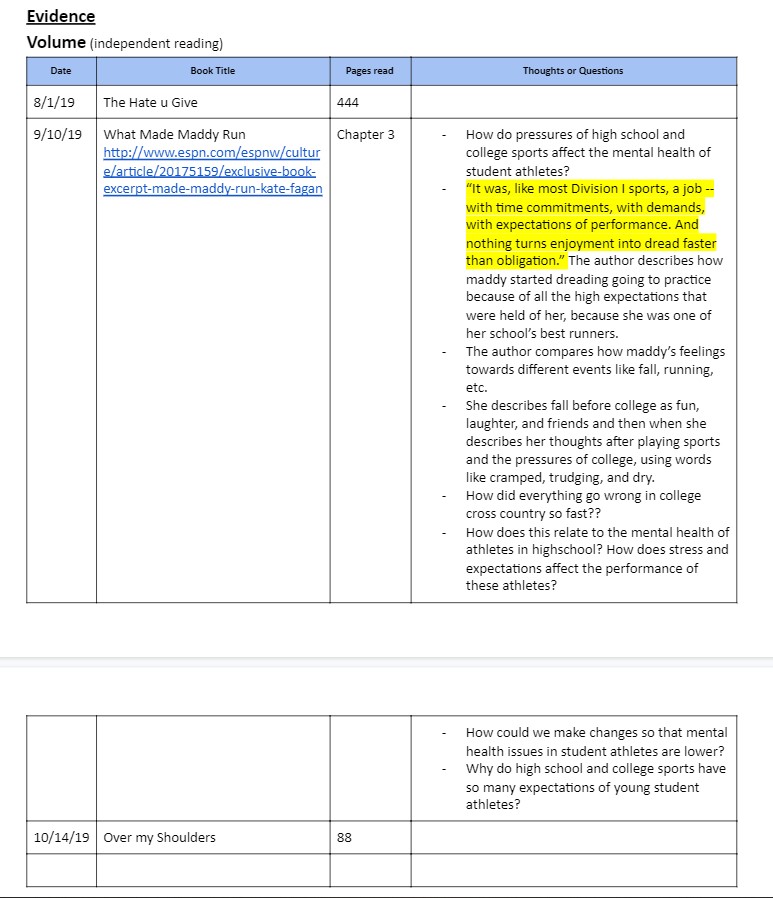

- Volume (independent reading, pleasure reading) skill focus: development of ideas and themes

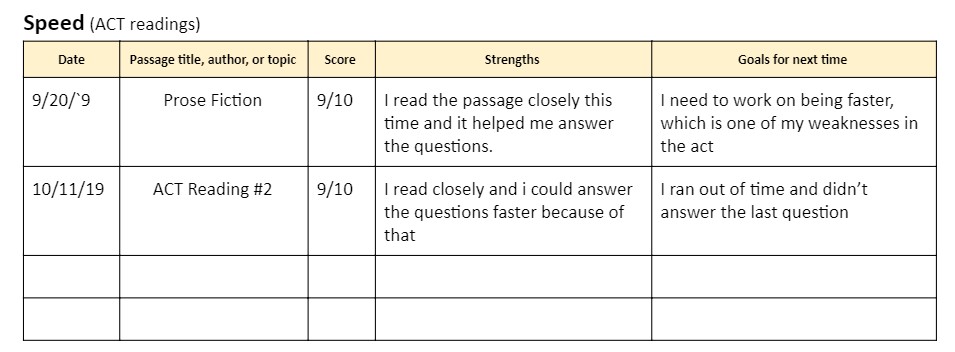

- Speed (for ACT-type scenarios) skill focus: comprehension

- Depth (close reading, annotations, classroom discussions, etc.) skill focus: comprehension, style analysis

Each type of reading requires something different from readers. The task was to find good evidence of each type from each unit. Students could choose from our reading check-ins and the pieces we annotated or discussed together, or they could build other ways to interact with their independent reading. The goal was to learn which strategies make sense for each type of reading we do: annotating short works, tracking information in longer works, and reading to find test answers.

Gather artifacts and experiences

Once we understood the different types, I was able to better organize class time to meet those goals. Our reading workshop time was mainly spent on volume, but occasionally we’d do a check-in that asked students to reflect on their books, and they could use that reflection as evidence of depth.

For speed we would periodically test our comprehension using ACT or AP Comp/AP Lit practice passages. We simulated the pressure of time and discussed test-taking strategies, test-making strategies, and what it means to read a short text with rigor. I never counted these as actual scores, only as experiences they needed to complete. This took some pressure off and enabled them to engage with learning how to learn.

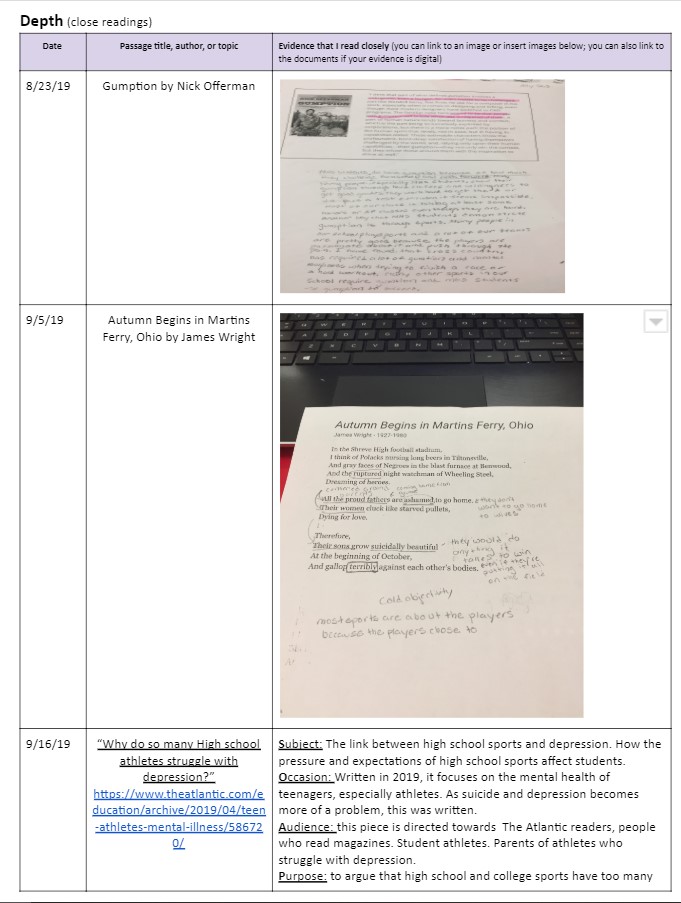

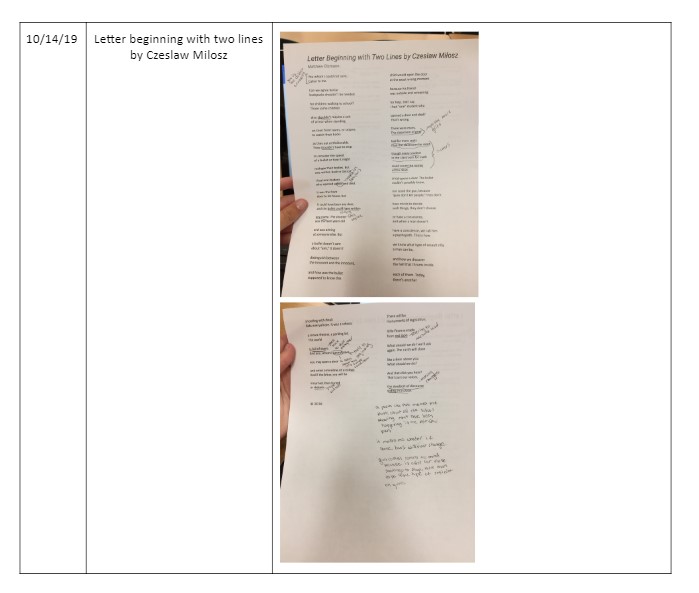

Finally, when we read poems, articles, or other short texts together as a class, I always point out that if they choose to annotate or reflect on the piece, they can use it as evidence for depth. Most take me up on it. This gives them some choice and ownership over the annotation tasks instead of me requiring post-it notes on every chapter of Gatsby. In reality, I can tell from one or two artifacts whether or not a student is actively engaging with the text in effective ways. You can see a few images of how one student collected the artifacts.

Discuss quality of the artifacts

Because I didn’t want the portfolio to simply be a completion grade, we tried to attach some traits to strong reading responses, specifically for depth. I essentially trusted what I saw during daily reading workshop time and in some informal check-ins for volume, counted the practice tests as completion for speed, and then used depth as the category to focus on assessing. I used an informal rubric that focused on the specificity and complexity of their interactions, since those are the two words/skills we’d been focusing on, but you could adapt it to the specific traits you’re hoping to capture in their reading work.

The end products are not pretty (Student example from Q1; Student example from Q2), and I’m sure there are better technology solutions to explore, but they do offer me a decent picture of what each individual student is up to as a reader in a way that I wasn’t able to see when I collected and averaged quizzes and study guide questions. The portfolios have improved the vocabulary of our discussions about tasks. And ultimately they have helped continue the shift of ownership over their reading lives from me to them, which is the end goal of workshop.

Nathan Coates teaches junior English at Mason High School, in a large suburban district near Cincinnati, Ohio. He is excited to start reading the final installment of the Wolf Hall trilogy.
